Docker Server Configuration Guide

Docker server configuration is the first question serious engineers ask before they move containers from a laptop to production. A container host is not just a place to run images. It is a resource scheduler, a filesystem pressure point, a network boundary, and a fault domain. On a site focused on US infrastructure, that matters even more, because latency patterns, traffic direction, remote administration, hosting, and colocation strategy all shape how a container platform behaves under load. If the base server is undersized or badly balanced, the problem rarely appears as a clean failure. It usually arrives as noisy neighbors, slow builds, stalled writes, log growth, packet drops, and sudden process eviction.

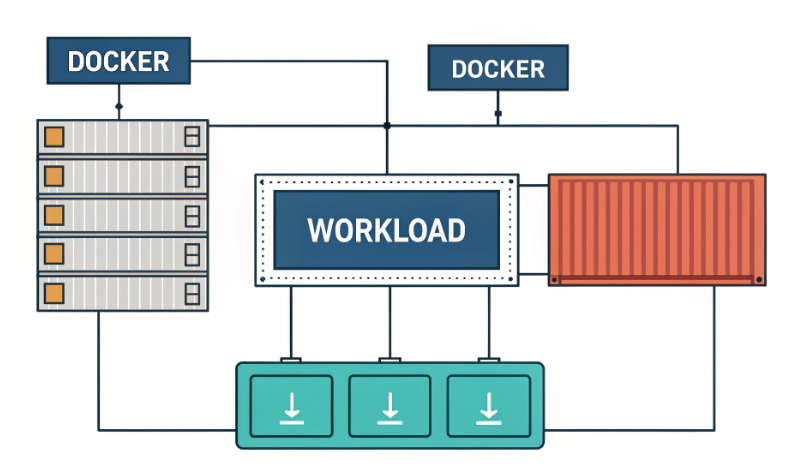

At a technical level, Docker depends on Linux kernel primitives such as namespaces, cgroups, and layered storage. Official documentation also highlights practical host considerations, including supported Linux architectures, storage driver behavior, and resource controls for CPU and memory. Modern deployments therefore need more than “enough resources.” They need the right resource profile, the right filesystem behavior, and operational headroom for image pulls, writable layers, logs, and sidecar services.

Why Docker host sizing is different from ordinary server sizing

A classic application server often maps one operating environment to one workload. A Docker host does not. It may run a reverse proxy, one or more APIs, a background worker set, telemetry agents, scheduled jobs, and internal tooling at the same time. Even if each container is light, the aggregate footprint is rarely light. Layered images consume storage differently from traditional package installs, and copy-on-write behavior means write-heavy applications can turn a modest host into an I/O bottleneck faster than many teams expect. Official guidance around storage drivers and backing filesystems reinforces that the host filesystem is not a minor detail. It directly influences compatibility and runtime characteristics.

- CPU pressure comes from concurrent services, builds, compression, encryption, and burst traffic.

- Memory pressure comes from application heaps, page cache, orchestration agents, and log pipelines.

- Storage pressure comes from images, writable layers, volumes, and accumulated logs.

- Network pressure comes from east-west traffic, ingress, egress, and observability exports.

That is why container host planning should start from workload shape, not from a generic server template.
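As a rough illustration of starting from workload shape, the sketch below sums the expected footprint of a small stack. The service names and every figure are hypothetical placeholders, not measurements; the point is only that several light containers add up to a footprint that is not light.

```python
from dataclasses import dataclass


@dataclass
class Service:
    name: str
    cpu_cores: float   # expected peak CPU, in cores
    memory_mib: int    # expected peak resident memory
    disk_gib: float    # image + writable layer + logs


# Hypothetical stack; replace with numbers measured on your own workloads.
STACK = [
    Service("reverse-proxy", 0.5, 256, 1.0),
    Service("api", 2.0, 1024, 2.0),
    Service("worker", 1.5, 768, 2.0),
    Service("telemetry-agent", 0.25, 256, 4.0),
]


def aggregate(services):
    """Sum the per-service estimates into one host-level footprint."""
    return {
        "cpu_cores": sum(s.cpu_cores for s in services),
        "memory_mib": sum(s.memory_mib for s in services),
        "disk_gib": sum(s.disk_gib for s in services),
    }


totals = aggregate(STACK)
print(totals)  # {'cpu_cores': 4.25, 'memory_mib': 2304, 'disk_gib': 9.0}
```

These totals describe only the containers. Host overhead and headroom still have to be added on top.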

CPU: prioritize concurrency over headline clocks

For most Docker workloads, CPU planning is about sustained concurrency rather than raw peak frequency. Containers share the host scheduler, and the runtime can enforce CPU limits through cgroups. In practice, a host with too little compute headroom develops a queueing problem: application requests wait, build jobs run long, health checks time out, and restart loops become more common. Docker’s own resource controls show that CPU allocation is flexible, but flexibility does not create capacity. It only divides what already exists.

- For test and lab environments, prioritize predictable behavior over density. You want room for shells, package updates, temporary build steps, and debugging tools.

- For web application stacks, plan for bursts. Reverse proxies, app runtimes, and background workers can all spike at the same time.

- For microservices, count scheduler overhead. More services mean more context switching, more health checks, and more noisy contention.

- For CI-style workloads, budget extra compute for image builds, archive operations, and dependency resolution.

A useful rule of thumb: if your host regularly runs near CPU saturation, every container becomes less predictable. Stable container platforms are built on spare cycles.
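To make the "limits only divide capacity" point concrete, here is a minimal sketch. It assumes the default CFS period of 100000 microseconds, under which a `--cpus` value maps to a quota of `cpus * period`. The helper names and the sample limits are illustrative.

```python
def cfs_quota_us(cpus: float, period_us: int = 100_000) -> int:
    """Translate a --cpus style limit into a CFS quota for the given period."""
    return round(cpus * period_us)


def oversubscription(cpu_limits, host_cores: int) -> float:
    """Ratio of the summed container CPU limits to the cores that actually exist."""
    return sum(cpu_limits) / host_cores


print(cfs_quota_us(1.5))                      # 150000
print(oversubscription([1.5, 2.0, 0.5], 2))   # 2.0
```

A ratio above 1.0 is not automatically wrong, since containers rarely peak together, but it does mean the limits alone will not prevent contention when they do.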

Memory: the resource that fails messily

Memory is often the true limiting factor on a Docker server. CPU starvation degrades performance, but memory starvation can trigger abrupt failure. Docker documentation notes that when the kernel runs short on memory, the system may invoke the out-of-memory killer. In a busy container host, that means a process may disappear long before users understand why.

Engineers should treat RAM as a pool shared by more than application containers. The host also needs memory for:

- filesystem cache

- container runtime overhead

- monitoring and log shippers

- security tooling

- temporary build layers

- package manager operations

If your applications are garbage-collected, memory planning becomes even trickier. A service may look calm at idle and expand sharply during traffic bursts or batch work. Datastores and caches inside containers raise the stakes further because they compete with the rest of the stack for the same host memory. For that reason, production hosts should be sized with failure margins, not with optimistic averages.
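One way to size with failure margins is to subtract a safety margin and a host reserve before counting container limits. The sketch below is an assumption-laden illustration: the 25% margin, the 2 GiB host reserve, and the container limits are made-up values, not recommendations.

```python
def memory_headroom_mib(total_mib, container_limits_mib, host_reserve_mib, margin=0.25):
    """Return the MiB left after container limits, host reserve, and a failure margin.

    A negative result means the host is overcommitted under these assumptions.
    """
    usable = total_mib * (1 - margin)
    committed = sum(container_limits_mib) + host_reserve_mib
    return usable - committed


# Hypothetical 16 GiB host running four containers.
headroom = memory_headroom_mib(
    total_mib=16384,
    container_limits_mib=[4096, 2048, 2048, 1024],
    host_reserve_mib=2048,
)
print(headroom)  # 1024.0
```

If the result trends toward zero as services are added, that is the signal to resize before the out-of-memory killer makes the decision for you.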

Storage: where container theory meets operational reality

Storage is where many Docker deployments become unexpectedly fragile. Images, writable layers, logs, and persistent volumes all consume disk in different ways. Docker documentation advises choosing storage driver and backing filesystem combinations carefully, and it warns against manually editing runtime data under the daemon's data directory. Official material also points out that layered storage uses copy-on-write techniques, which can behave very differently from direct writes on a traditional host layout.

For a practical Docker server, storage planning should answer four questions:

- Where will images live?

- Where will logs grow?

- Which data must survive container replacement?

- How much random write pressure will the workload generate?

Fast storage matters because containers tend to amplify metadata activity. Pulls, unpacking, layer creation, package installation during builds, and log rotation all create small operations that punish weak disks. Teams that focus only on capacity often miss the bigger issue: latency under mixed read-write pressure.

- Use a filesystem and storage driver combination that is known to work well with modern Docker deployments.

- Separate persistent application data from ephemeral container layers whenever possible.

- Watch inode consumption as carefully as free space.

- Rotate logs before they become your largest hidden volume.

In short, a Docker host does not merely need “more disk.” It needs disk behavior aligned with how containers read, write, and rebuild state.
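Watching inodes alongside free space can be sketched with the standard library alone. The example below checks `/` so that it runs anywhere on a Unix-like host; on a real Docker server you would point it at the daemon's data directory (commonly `/var/lib/docker`, which is an assumption about your layout).

```python
import os


def fs_usage(path="/"):
    """Report space and inode usage percentages for the filesystem holding path."""
    st = os.statvfs(path)
    space_used_pct = None
    if st.f_blocks:
        # f_bavail counts blocks available to unprivileged processes.
        space_used_pct = 100.0 * (1 - st.f_bavail / st.f_blocks)
    inode_used_pct = None
    if st.f_files:
        # Some filesystems report zero total inodes; leave the value as None then.
        inode_used_pct = 100.0 * (st.f_files - st.f_ffree) / st.f_files
    return {"space_used_pct": space_used_pct, "inode_used_pct": inode_used_pct}


usage = fs_usage("/")
print(usage)
```

A host can show plenty of free gigabytes and still refuse writes once inodes run out, which is why both numbers belong on the same dashboard.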

Network and bandwidth: think in flows, not in port counts

Many buyers ask what bandwidth a Docker server needs, but a better question is how traffic flows through the host. A single machine may handle public ingress, internal service calls, artifact pulls, outbound API requests, metrics export, and backup traffic. On US infrastructure, this becomes especially relevant when the audience is distributed across regions or when engineering teams manage the platform remotely from another geography.

When evaluating network requirements, focus on these patterns:

- North-south traffic: user requests entering and leaving the host.

- East-west traffic: container-to-container or node-to-node communication.

- Control traffic: registries, package mirrors, update channels, and orchestration signals.

- Operations traffic: backups, telemetry, and remote shell access.

For public services, network stability often matters more than peak throughput. Engineers running APIs, gateways, or event-driven services should also remember that packet loss, jitter, or firewall misconfiguration can look like random application instability. Official installation guidance for Linux additionally notes firewall considerations, which is a reminder that container networking is partly an operating system design problem, not just an application setting.
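Retransmissions are one host-level hint that "random" instability is really a network problem. The sketch below parses the `Tcp:` section of Linux's `/proc/net/snmp`; the sample text is fabricated for illustration, and on a real host you would read the file itself and compare two readings, because the counters are cumulative since boot.

```python
# Fabricated sample in the /proc/net/snmp layout: a header row, then a value row.
SAMPLE = """\
Tcp: RtoAlgorithm RtoMin RtoMax MaxConn ActiveOpens PassiveOpens AttemptFails EstabResets CurrEstab InSegs OutSegs RetransSegs InErrs OutRsts InCsumErrors
Tcp: 1 200 120000 -1 100 50 2 3 10 10000 8000 40 0 5 0
"""


def tcp_retransmit_ratio(snmp_text: str) -> float:
    """Return RetransSegs / OutSegs from the Tcp rows of /proc/net/snmp text."""
    rows = [line.split() for line in snmp_text.splitlines() if line.startswith("Tcp:")]
    if len(rows) < 2:
        raise ValueError("no Tcp section found")
    stats = dict(zip(rows[0][1:], rows[1][1:]))
    out_segs = int(stats["OutSegs"])
    retrans = int(stats["RetransSegs"])
    return retrans / out_segs if out_segs else 0.0


ratio = tcp_retransmit_ratio(SAMPLE)
print(ratio)  # 0.005
```

What counts as a worrying ratio depends on the path and workload, so treat the trend over time as the signal rather than any single threshold.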

Operating system and kernel expectations

Docker can run in several environments, but Linux remains the natural home for serious container hosting. Official documentation for the engine and related components consistently centers Linux kernel features, supported architectures, storage drivers, cgroup behavior, and namespace-based isolation.

A good container host operating system should provide:

- a current and stable kernel line

- clean support for cgroups and namespaces

- predictable firewall tooling

- mature package maintenance

- long-lived security updates

The practical takeaway is simple. Pick a Linux environment that your team can patch, audit, and automate confidently. Container reliability depends heavily on host hygiene.
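A quick way to check what the kernel exposes for cgroups is to look at the mounted hierarchy. This is a heuristic sketch: it assumes the conventional mount point `/sys/fs/cgroup`, treats the presence of `cgroup.controllers` as the unified (v2) hierarchy, and treats a `memory` subdirectory as a sign of v1. Hybrid setups may need a closer look.

```python
from pathlib import Path


def cgroup_version(root: str = "/sys/fs/cgroup"):
    """Return 2 for a unified hierarchy, 1 for a legacy layout, None if undetected."""
    base = Path(root)
    if (base / "cgroup.controllers").is_file():
        return 2
    if (base / "memory").is_dir():
        return 1
    return None


def enabled_controllers(root: str = "/sys/fs/cgroup"):
    """List the controllers named in cgroup.controllers, or [] if the file is absent."""
    path = Path(root) / "cgroup.controllers"
    return path.read_text().split() if path.is_file() else []


print(cgroup_version(), enabled_controllers())
```

Running this as part of host provisioning makes it obvious early whether the resource controls you plan to rely on are actually available.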

Security features that affect configuration choices

Engineers sometimes treat security as an add-on after the host is online. That is a mistake. Security posture changes configuration requirements from the beginning. Features such as user namespace isolation, rootless operation, stricter daemon settings, and segmented firewall policy all affect compatibility, troubleshooting, and performance tradeoffs. Docker documentation includes dedicated guidance for user namespace remapping and rootless mode, making it clear that host design and privilege boundaries should be planned early.

- Decide whether containers need elevated privileges at all.

- Separate control-plane access from application traffic.

- Restrict image sources and watch supply-chain paths.

- Limit writable areas and review bind mounts carefully.

A server built for containers should not only run workloads. It should constrain them.
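The questions above can be partly automated against `docker inspect` output. The sketch below works on an already-parsed inspect entry and flags privileged mode, added capabilities, and writable bind mounts. The sample entry is fabricated, and the set of checks is a starting point rather than a complete policy.

```python
def audit_container(inspect_entry: dict) -> list:
    """Return human-readable findings for one parsed `docker inspect` entry."""
    findings = []
    host_cfg = inspect_entry.get("HostConfig") or {}
    if host_cfg.get("Privileged"):
        findings.append("runs privileged")
    for cap in host_cfg.get("CapAdd") or []:
        findings.append(f"adds capability {cap}")
    for mount in inspect_entry.get("Mounts") or []:
        if mount.get("Type") == "bind" and mount.get("RW"):
            findings.append(
                f"writable bind mount {mount.get('Source')} -> {mount.get('Destination')}"
            )
    return findings


# Fabricated example entry, trimmed to the fields the audit reads.
SAMPLE_ENTRY = {
    "Name": "/example-app",
    "HostConfig": {"Privileged": True, "CapAdd": ["NET_ADMIN"]},
    "Mounts": [
        {"Type": "bind", "Source": "/etc", "Destination": "/host-etc", "RW": True},
        {"Type": "volume", "Source": "data", "Destination": "/data", "RW": True},
    ],
}

findings = audit_container(SAMPLE_ENTRY)
for finding in findings:
    print(finding)
```

In practice the input would come from `json.loads` over the output of `docker inspect`, which returns a list with one entry per container.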

Configuration planning by workload type

Not every Docker host needs the same shape. The right configuration depends on workload behavior more than on the number of containers alone.

- Development and testing: favor flexibility, snapshots, and quick rebuild cycles.

- Static and light dynamic sites: prioritize network cleanliness and simple observability.

- API services: reserve headroom for bursts, queue workers, and structured logging.

- Data-heavy applications: emphasize memory discipline and storage latency.

- Microservice clusters: budget for service discovery, telemetry, and sidecar overhead.

If your environment may grow, choose a server profile that supports clean migration. Container platforms are easy to start and expensive to resize badly.

Common failure signs of an undersized Docker server

Most misconfigured hosts do not fail all at once. They degrade through a repeating pattern of weak signals:

- containers restart without obvious application errors

- image pulls and builds become slow or inconsistent

- log writes stall during traffic bursts

- disk fills unexpectedly because old layers accumulate

- health checks fail only under concurrency

- latency climbs even when application code has not changed

When you see these symptoms together, the issue is often infrastructure shape rather than code quality. That is why observability should begin at the host level: CPU steal, memory pressure, I/O wait, and network retransmits tell a more honest story than container uptime alone.
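CPU steal and I/O wait can be derived from two readings of the aggregate `cpu` line in Linux's `/proc/stat`. The sketch below uses two fabricated sample lines so that it runs anywhere; on a real host you would read the first line of `/proc/stat` twice, a few seconds apart.

```python
def parse_cpu_line(line: str):
    """Parse the aggregate 'cpu' line of /proc/stat into a list of integer counters."""
    fields = line.split()
    if fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line")
    return [int(value) for value in fields[1:]]


def steal_and_iowait(before: str, after: str):
    """Return the fractions of CPU time spent in steal and iowait between two samples."""
    a, b = parse_cpu_line(before), parse_cpu_line(after)
    # Counters: user nice system idle iowait irq softirq steal (guest time is part of user).
    total = sum(b[:8]) - sum(a[:8])
    if total <= 0:
        return {"steal": 0.0, "iowait": 0.0}
    return {"steal": (b[7] - a[7]) / total, "iowait": (b[4] - a[4]) / total}


# Fabricated samples for illustration.
BEFORE = "cpu  100 0 50 800 20 0 5 25 0 0"
AFTER = "cpu  200 0 100 1500 50 0 10 140 0 0"

pressure = steal_and_iowait(BEFORE, AFTER)
print(pressure)  # {'steal': 0.115, 'iowait': 0.03}
```

Sustained steal on a virtualized host points at contention outside your control, which is exactly the kind of signal container uptime will never show.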

A practical checklist before you choose hosting or colocation

Whether you deploy through hosting or build around colocation, evaluate the Docker host with a systems mindset.

- Map every planned container and classify it as stateless, stateful, or operational.

- Estimate write intensity, not just total data volume.

- Reserve memory for the host and for non-application agents.

- Confirm filesystem compatibility with the intended container storage model.

- Define log retention and cleanup policy before launch.

- Test failure behavior under constrained CPU and memory conditions.

- Verify firewall and network path assumptions with real traffic patterns.

This checklist is boring in the best possible way. Boring infrastructure is exactly what production containers need.
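The log retention item lends itself to simple arithmetic. With the `json-file` logging driver, the `max-size` and `max-file` options bound how much each container can keep, so the worst case is roughly containers times size times file count. The container count and limits below are hypothetical, and sizes are treated as approximate megabytes.

```python
import json


def worst_case_log_mb(containers: int, max_size_mb: int, max_file: int) -> int:
    """Upper bound on rotated log storage across all containers, in approximate MB."""
    return containers * max_size_mb * max_file


def daemon_log_config(max_size_mb: int, max_file: int) -> str:
    """Render a daemon.json fragment that sets json-file rotation defaults."""
    config = {
        "log-driver": "json-file",
        "log-opts": {"max-size": f"{max_size_mb}m", "max-file": str(max_file)},
    }
    return json.dumps(config, indent=2)


budget = worst_case_log_mb(containers=20, max_size_mb=10, max_file=3)
print(budget)  # 600
print(daemon_log_config(10, 3))
```

Daemon-level defaults apply to containers created after the change, so existing containers generally need to be recreated to pick them up.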

Conclusion

Docker server configuration is not about chasing a single perfect template. It is about matching CPU concurrency, memory headroom, storage behavior, kernel support, and network stability to the reality of your workloads. For technical teams using US infrastructure, the smartest approach is to treat the container host as a performance boundary and an isolation boundary at the same time. Build for noisy bursts, plan for disk and log growth, respect kernel-level constraints, and keep enough room for the host to breathe. If you do that, your Docker platform will be easier to scale, easier to debug, and far less likely to fail in weird ways at the worst possible moment.