How to Do Data Lifecycle Management

In modern infrastructure, data lifecycle management is no longer a policy document that sits in a wiki and collects dust. It is an operational discipline that decides how data is ingested, tagged, stored, replicated, queried, archived, restored, and finally destroyed. For engineering teams running customer workloads, internal platforms, or regional services on Japan-based hosting, the challenge is not whether data exists, but whether every dataset has a defined state, owner, retention rule, and recovery path.

The core idea is simple: data should not be treated as a flat blob. It changes temperature, value, risk, and access pattern over time. Operational telemetry is hot for minutes, transaction records may stay active for months, compliance logs can become cold but must remain searchable, and expired records must be disposed of in a verifiable way. Security agencies and technical guidance bodies consistently emphasize asset inventory, tested backups, separation of backup storage, encryption, and recovery readiness as baseline resilience measures. Those principles map directly to lifecycle design in production environments.

Why Data Lifecycle Management Matters in Real Systems

Technical teams usually feel the need for lifecycle controls long before management gives the problem a name. Storage cost drifts upward. Query latency gets worse because old and new records live in the same tier. Backup windows expand. Recovery objectives become fuzzy. A single retention mistake can also create legal, privacy, or incident-response problems. In distributed environments, especially those serving users across East Asia, the infrastructure layer must balance low latency, durability, and controlled data movement.

A good lifecycle model solves several problems at once:

- It reduces waste by matching storage class to actual access frequency.

- It improves reliability by defining backup and restore behavior before failure happens.

- It lowers security exposure by shrinking the amount of stale or overexposed data.

- It supports audits because retention and deletion are based on rules, not guesswork.

- It makes scaling easier because datasets are segmented by purpose and age.

In plain engineering terms, lifecycle management is the difference between “we have data somewhere” and “we know what this dataset is, why it exists, who can touch it, how long it stays online, and how fast we can recover it.”

The Stages of a Practical Data Lifecycle

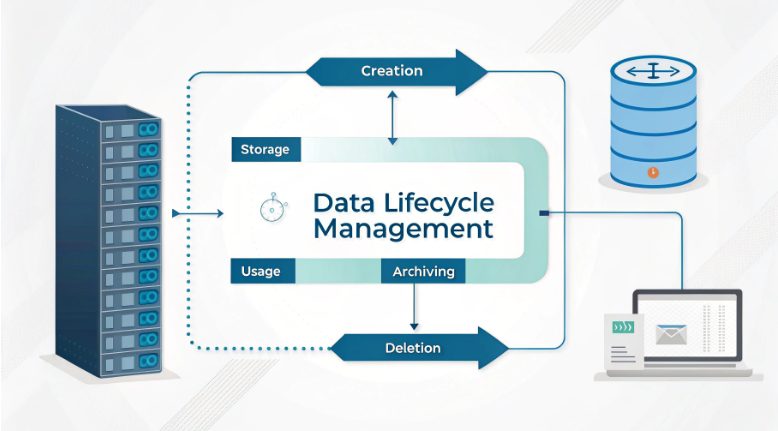

Most teams benefit from modeling the lifecycle as a pipeline rather than a static repository. The exact labels vary, but the mechanics are usually consistent.

- Creation or ingestion: data enters the environment from applications, APIs, users, devices, or batch imports.

- Classification: the dataset is labeled by sensitivity, business value, retention need, and access profile.

- Active storage and use: data lives in the performance tier required by production systems and analytics jobs.

- Protection: backups, snapshots, replication, checksums, and access controls are applied.

- Archival: aging data moves to lower-cost media while preserving integrity and discoverability.

- Recovery and validation: restore workflows are tested so that recovery claims are measurable.

- Disposal: expired or invalid data is deleted or destroyed according to policy and technical controls.

What matters is not the wording but the state transitions. If a dataset can move from hot to cold storage, there must be a trigger. If it can be deleted, there must be a retention rule. If it is backed up, there must be a restore test. Lifecycle design fails when stages exist only in diagrams and not in automation.
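One way to keep state transitions out of diagrams and inside automation is to encode them as an explicit table. The sketch below is a minimal illustration, not a prescribed design: the three states, the trigger names, and the `transition` helper are all assumptions made for the example.

```python
from enum import Enum


class State(Enum):
    ACTIVE = "active"
    ARCHIVED = "archived"
    DISPOSED = "disposed"


# Every allowed move names the trigger that must fire first.
# The trigger names are hypothetical placeholders.
TRANSITIONS = {
    (State.ACTIVE, State.ARCHIVED): "age_threshold_reached",
    (State.ARCHIVED, State.ACTIVE): "restore_requested",
    (State.ARCHIVED, State.DISPOSED): "retention_expired",
}


def transition(current: State, target: State, trigger: str) -> State:
    """Return the new state, or raise if the move is undefined or untriggered."""
    required = TRANSITIONS.get((current, target))
    if required is None:
        raise ValueError(f"no transition defined: {current.value} -> {target.value}")
    if trigger != required:
        raise ValueError(
            f"{current.value} -> {target.value} requires trigger {required!r}"
        )
    return target
```

With this table, a dataset cannot jump from active straight to disposed, and an archive move without its trigger is rejected rather than silently applied.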

How to Build a Data Lifecycle Strategy

The fastest way to fail is to start with tools instead of inventory. Begin by mapping the data surface area of the environment. That means identifying what exists, where it lives, how fast it grows, who owns it, which workloads depend on it, and what would break if it vanished. Official cybersecurity guidance repeatedly starts with asset inventory for a reason: you cannot protect, archive, or retire what you do not know exists.

A useful implementation sequence looks like this:

- Inventory data assets across databases, object stores, logs, block volumes, backups, and exports.

- Classify data by confidentiality, integrity sensitivity, retention period, and access pattern.

- Define storage tiers for hot, warm, and cold datasets.

- Set retention rules for each class based on technical and governance needs.

- Automate movement between tiers using age, event, or usage thresholds.

- Test backup and restore on a schedule that matches recovery objectives.

- Enforce deletion with logs, approval paths, and proof of execution.

This sequence is intentionally boring, and that is a feature. Lifecycle work should be deterministic. The more it depends on heroic manual effort, the more likely it is to drift.
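The first steps of the sequence (inventory, classify, set retention) can be checked mechanically. The following sketch assumes a hypothetical `Asset` record and flags entries that are missing an owner, a data class, or a retention period; the field names are illustrative, not a standard schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Asset:
    name: str
    location: str
    owner: Optional[str] = None
    data_class: Optional[str] = None
    retention_days: Optional[int] = None


# Fields every inventoried asset is expected to carry (an assumption).
REQUIRED_FIELDS = ("owner", "data_class", "retention_days")


def inventory_gaps(assets: list[Asset]) -> dict[str, list[str]]:
    """Map each incomplete asset name to the required fields it lacks."""
    gaps = {}
    for asset in assets:
        missing = [f for f in REQUIRED_FIELDS if getattr(asset, f) is None]
        if missing:
            gaps[asset.name] = missing
    return gaps
```

Running a check like this on a schedule turns "no inventory" from a vague worry into a list of named gaps that someone can close.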

Classify First, Store Second

Engineers often overfocus on where data sits and underfocus on what the data actually is. Classification must come before storage optimization. A clean taxonomy prevents expensive mistakes, such as keeping sensitive records in broad-access analytics buckets or storing low-value historical logs in premium media forever.

A simple technical classification scheme can include:

- Sensitivity: public, internal, confidential, restricted.

- Availability need: mission-critical, important, noncritical.

- Retention horizon: short-term, medium-term, long-term.

- Temperature: hot, warm, cold, frozen.

- Mutability: mutable, append-only, immutable.

Once data classes are stable, storage decisions become easier. High-frequency transaction records may need low-latency access and aggressive replication. Compliance snapshots may be immutable and cold. Telemetry might start hot and roll down quickly after aggregation. Classification also improves access control, because permission models can be bound to data type instead of improvised on each system.
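As a rough sketch, the scheme above can be expressed as a small set of types so that labels are validated rather than typed freehand. The class names and the `can_read` rule are assumptions for illustration; a real permission model would be richer than a single clearance comparison.

```python
from dataclasses import dataclass
from enum import Enum, IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


class Availability(Enum):
    MISSION_CRITICAL = "mission-critical"
    IMPORTANT = "important"
    NONCRITICAL = "noncritical"


class Retention(Enum):
    SHORT_TERM = "short-term"
    MEDIUM_TERM = "medium-term"
    LONG_TERM = "long-term"


class Temperature(Enum):
    HOT = "hot"
    WARM = "warm"
    COLD = "cold"
    FROZEN = "frozen"


class Mutability(Enum):
    MUTABLE = "mutable"
    APPEND_ONLY = "append-only"
    IMMUTABLE = "immutable"


@dataclass(frozen=True)
class DataClass:
    sensitivity: Sensitivity
    availability: Availability
    retention: Retention
    temperature: Temperature
    mutability: Mutability


def can_read(clearance: Sensitivity, data_class: DataClass) -> bool:
    """Allow access only when the caller's clearance covers the label."""
    return clearance >= data_class.sensitivity
```

Because the permission check reads the label instead of the system name, the same rule applies wherever a dataset with that class ends up.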

Storage Tiers, Archive Design, and Cost Control

Lifecycle management is where infrastructure economics meets architecture. If all data stays in the highest-performance tier, the platform becomes needlessly expensive. If cold data is archived too aggressively, queries and investigations become painful. The goal is not cheapest storage; it is correct storage.

A practical tiering model usually includes:

- Hot tier for active databases, current objects, and low-latency reads.

- Warm tier for recent but less frequently accessed records.

- Cold tier for historical data that must remain available with slower retrieval.

- Archive tier for long-term retention with integrity guarantees and minimal cost footprint.
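A tiering rule can be as small as one function that looks at age and recent access. The sketch below is only an illustration: the day and read-count thresholds are invented for the example and would need to be derived from real access data and cost figures.

```python
from datetime import timedelta


def choose_tier(age: timedelta, reads_last_30d: int) -> str:
    """Pick a storage tier from dataset age and recent read count.

    All thresholds are illustrative assumptions, not recommendations.
    """
    if age <= timedelta(days=30) or reads_last_30d >= 100:
        return "hot"
    if age <= timedelta(days=180) or reads_last_30d >= 10:
        return "warm"
    if age <= timedelta(days=730) or reads_last_30d >= 1:
        return "cold"
    return "archive"
```

Note that heavy recent access keeps old data in a faster tier, which is the point of matching storage class to actual access frequency rather than to age alone.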

Archive design should answer four technical questions:

- How is data indexed after archival?

- How long does retrieval take under normal and emergency conditions?

- How is integrity validated over time?

- What event causes final disposal?
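For the integrity question, one common approach is to record a checksum manifest at archival time and re-verify it on a schedule. The sketch below uses SHA-256 from the Python standard library; the `build_manifest` and `verify_manifest` helpers and the directory layout are assumptions for illustration.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large archives do not load into memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Record a checksum for every file under root at archival time."""
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def verify_manifest(manifest: dict[str, str], root: Path) -> list[str]:
    """Return relative paths that are missing or whose hash has changed."""
    failures = []
    for rel_path, expected in manifest.items():
        target = root / rel_path
        if not target.is_file() or sha256_of(target) != expected:
            failures.append(rel_path)
    return failures
```

An empty result from `verify_manifest` means every archived file still matches its recorded hash; anything else is a candidate for repair from a second copy.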

Teams using regional infrastructure in Japan often apply this model to support low-latency production traffic in Asia while keeping historical records segregated from active workloads. Location helps with latency, but the lifecycle still depends on policy and automation, not geography alone.

Backup, Recovery, and the Difference Between Copies and Resilience

One of the biggest lifecycle mistakes is equating backup with recovery. A backup file is not proof of resilience. Technical guidance from cybersecurity authorities consistently recommends backing up critical systems on a regular cadence, storing backups separately from source systems, and testing recovery. That last part matters most. If restore procedures are untested, the backup is a hypothesis.

Strong backup design should include:

- Documented recovery point objective and recovery time objective.

- Isolation between primary systems and backup repositories.

- Encryption for data at rest and in transit.

- Offline, immutable, or otherwise tamper-resistant copies for critical datasets.

- Periodic restore drills against realistic failure scenarios.

- Versioning for both data and configuration artifacts.

Recovery planning must cover more than user data. Rebuild images, infrastructure definitions, access policies, secrets rotation plans, and service dependencies should also be mapped. In a serious incident, restoring tables without restoring the operating context leads to a long outage with a short checklist.
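A restore drill is only useful if its result is compared against the documented objectives. The sketch below assumes a hypothetical `RestoreDrill` record in which the simulated failure time stands in for a real incident; it reports whether the recovery point objective (RPO), the recovery time objective (RTO), and data verification were each satisfied.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class RestoreDrill:
    backup_taken_at: datetime      # timestamp of the backup being restored
    failure_at: datetime           # simulated moment of failure
    service_restored_at: datetime  # when the restored service was usable
    data_verified: bool            # did post-restore checks pass?


def evaluate_drill(drill: RestoreDrill, rpo: timedelta, rto: timedelta) -> dict[str, bool]:
    """Compare one drill against the documented recovery objectives."""
    data_loss_window = drill.failure_at - drill.backup_taken_at
    downtime = drill.service_restored_at - drill.failure_at
    return {
        "rpo_met": data_loss_window <= rpo,
        "rto_met": downtime <= rto,
        "data_verified": drill.data_verified,
    }
```

A drill that restores clean data but takes twice the allowed time still fails, which is exactly the kind of gap a backup success report would hide.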

Security Controls Across the Lifecycle

Data lifecycle management is inseparable from security engineering. Every stage adds a different attack surface. Ingestion can accept poisoned or malformed input. Active storage can expose broad permissions. Archives can become forgotten shadow datasets. Disposal can fail, leaving supposedly deleted records recoverable.

Security controls should be state-aware:

- At ingestion: validate source, schema, and trust boundary.

- At storage: encrypt, segment, and minimize privilege.

- At use: log access, enforce role scope, and watch for anomalous reads.

- At backup: separate credentials, lock down deletion paths, and verify integrity.

- At archive: preserve chain of custody and searchable metadata.

- At disposal: apply secure erase or cryptographic destruction where appropriate.

For technical teams, the most important mindset shift is this: old data is rarely harmless data. A stale dataset may have low business value but high breach value. Retention without purpose increases risk density.
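The state-aware list above can be turned into a simple gap check. In this sketch, the control identifiers are shorthand invented for the example and mirror the bullets loosely; how each control is actually detected in a given environment is left out.

```python
# Controls expected at each lifecycle stage. The identifiers are
# illustrative labels, not names from any standard or product.
REQUIRED_CONTROLS = {
    "ingestion": {"source_validation", "schema_validation"},
    "storage": {"encryption", "segmentation", "least_privilege"},
    "use": {"access_logging", "role_scope", "anomaly_detection"},
    "backup": {"separate_credentials", "deletion_lock", "integrity_check"},
    "archive": {"chain_of_custody", "searchable_metadata"},
    "disposal": {"verified_destruction"},
}


def missing_controls(stage: str, applied: set[str]) -> set[str]:
    """Return the controls a dataset still lacks for its current stage."""
    if stage not in REQUIRED_CONTROLS:
        raise ValueError(f"unknown lifecycle stage: {stage}")
    return REQUIRED_CONTROLS[stage] - applied
```

Evaluating this whenever a dataset changes stage makes it harder for an archive to become a forgotten shadow dataset with storage-era permissions.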

Automation, Observability, and Policy as Code

Manual lifecycle operations do not scale. If engineers have to remember when to archive tables, rotate snapshots, or purge expired records, drift is guaranteed. Mature environments define lifecycle rules as code and bind them to telemetry. That allows the platform to react to dataset age, legal hold flags, replication status, and cost thresholds in a repeatable way.

Good automation patterns include:

- Tagging datasets at creation time.

- Applying retention and movement rules through scheduled jobs or event triggers.

- Generating audit logs for every state transition.

- Alerting on backup failures, orphaned datasets, and overdue deletions.

- Publishing dashboards for growth rate, restore test success, and archive retrieval latency.

Observability closes the loop. Without metrics, lifecycle policy becomes faith-based administration. At minimum, teams should know dataset growth, backup age, restore success rate, retention exceptions, and archive recall time.
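To make the policy-as-code idea concrete, here is a minimal sketch of a rule evaluator that a scheduled job could run. The `Dataset` fields, the action names, and the audit record shape are assumptions for illustration; a production version would also execute the actions and persist the log.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Dataset:
    name: str
    created: date
    retention_days: int       # delete once this age is reached
    archive_after_days: int   # move to archive once this age is reached
    legal_hold: bool = False  # a hold overrides every other rule


def decide(ds: Dataset, today: date) -> str:
    """Return 'hold', 'delete', 'archive', or 'keep' for one dataset."""
    if ds.legal_hold:
        return "hold"
    age_days = (today - ds.created).days
    if age_days >= ds.retention_days:
        return "delete"
    if age_days >= ds.archive_after_days:
        return "archive"
    return "keep"


def run_policy(datasets: list[Dataset], today: date) -> list[dict]:
    """Evaluate every dataset and emit one audit record per decision."""
    return [
        {
            "dataset": ds.name,
            "action": decide(ds, today),
            "evaluated_on": today.isoformat(),
        }
        for ds in datasets
    ]
```

Checking the legal hold first is a deliberate ordering: an expired dataset under hold must never be deleted, so that rule wins over age-based ones. The audit records double as a metric source, since counting "delete" decisions that were never executed gives the overdue-deletion figure mentioned above.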

Why Japan-Based Infrastructure Can Support Lifecycle Goals

For organizations serving users in Japan or nearby regions, Japan-based server hosting can support lifecycle design in practical ways. Lower regional latency helps production tiers. Stable network paths improve replication and backup jobs. Regional placement can also simplify data segmentation strategies for workloads that need operational proximity to East Asian users. None of that replaces lifecycle policy, but it can make implementation cleaner.

Common deployment patterns include:

- Keeping hot application data close to regional users for low-latency access.

- Using separate storage domains for active, backup, and archive states.

- Isolating internal analytics from customer-facing production datasets.

- Designing retention and disposal workflows that match jurisdictional and business rules.

The same logic applies whether the operating model uses hosting or colocation. What matters is whether the environment supports segmentation, encryption, backup isolation, observability, and disciplined retention workflows.

Common Failure Modes and How to Avoid Them

Many lifecycle projects fail in familiar ways. The systems are usually modern; the process model is not.

- No inventory: unknown datasets keep growing outside policy.

- No restore testing: backup success reports hide recovery failure.

- No deletion workflow: expired data accumulates indefinitely.

- Flat storage design: all data stays in one expensive or unsafe tier.

- Weak access boundaries: archived or backup data becomes an easy target.

- Human-only operations: lifecycle tasks are inconsistent and undocumented.

The fix is rarely a new platform. It is usually tighter data ownership, better classification, tested recovery, and less improvisation.

Conclusion

The best version of data lifecycle management is not flashy. It is visible in clean inventories, explicit retention rules, measurable recovery, cost-aware storage tiers, and secure disposal that leaves an audit trail. For technical teams running regional workloads, especially on Japan-based infrastructure, the advantage comes from treating data as a state machine instead of a passive asset. When each dataset has a defined lifecycle, storage becomes cheaper, recovery becomes faster, and operations become far less fragile.