How to Safely Deploy GitHub Open Source on Servers

Pulling code from a public repository and pushing it into production feels natural to many engineers, but deploying open source code from GitHub safely is not a copy-and-run exercise. The moment a project leaves a sandbox and lands on your own hosting stack, it becomes part of your attack surface, your operational burden, and your incident response scope. For technical readers who care about reproducibility, isolation, and clean failure domains, the right question is not “does it work?” but “what exactly am I trusting, exposing, and inheriting?”

That framing matters because public code is never just code. It is source files, build logic, dependency trees, scripts, image layers, default credentials, undocumented assumptions, and maintainer habits. Security guidance from standards bodies and application security communities consistently emphasizes supply chain review, least privilege, and secure defaults as baseline controls rather than optional polish. In practical terms, that means you should verify what the project does, minimize what it can touch, and avoid granting broad access just because a setup guide asks for it.

Why You Should Never Deploy a Public Repository Blindly

Open source does not mean automatically reviewed, and popularity does not guarantee sane operational behavior. A repository can be well intentioned yet still unsafe for production because it was built as a demo, a lab tool, or a single-user utility. Some projects ship with permissive startup scripts, broad file access, development secrets in examples, or install instructions that assume a disposable machine. Those shortcuts are survivable on a local test box and dangerous on a long-lived internet-facing system.

Another issue is software supply chain risk. Modern applications depend on layers of third-party packages, transitive imports, and build-time helpers. Official guidance on supply chain security highlights that vulnerable or malicious dependencies can compromise your own application even if your direct code review looks clean. That means the repository you cloned may only be the visible tip of a much larger trust graph.

There is also a difference between execution and operation. Running a service once proves little. Production use requires durable logging, patch discipline, access boundaries, rollback paths, and predictable recovery. If a repository has none of these traits, deploying it as-is turns your server into a live experiment.

Start with Trust Signals, but Do Not Stop There

Before touching a shell, inspect the repository as if you were reviewing an unknown service boundary. Check whether maintainers are active, whether releases appear coherent, whether issue discussions reveal recurring security or reliability concerns, and whether deployment notes distinguish development from production. A healthy project usually exposes its assumptions. An unhealthy one tends to hide them in scripts, comments, or tribal knowledge.

Look for a clear license, readable installation notes, changelogs, and some evidence that the project is maintained as software rather than published as a code dump. None of those items prove safety, but their absence raises the cost of verification. Projects intended for serious use often document environment variables, required privileges, storage paths, network ports, and upgrade behavior. If those details are missing, you will need to infer them manually from code and startup logic.

Pay attention to suspicious patterns. Examples include one-line install commands that fetch and execute remote content, setup scripts that disable verification, runtime instructions that demand elevated privileges without explanation, or deployment examples that expose services directly to the internet. Security guidance around secure-by-default configurations stresses removing unnecessary functionality, eliminating demo features, and limiting privileges. A repository that normalizes the opposite should be treated carefully.
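A first pass over install and setup scripts can be automated. The following is a minimal sketch, assuming the scripts are plain shell text; the pattern names and regular expressions are illustrative choices, not an exhaustive or authoritative rule set, and a clean result proves nothing on its own.

```python
import re

# Hypothetical, non-exhaustive patterns for a first-pass review of setup scripts.
SUSPICIOUS_PATTERNS = {
    # Remote content fetched and piped straight into a shell.
    "pipe-to-shell": re.compile(r"\b(curl|wget)\b[^\n|]*\|\s*(sudo\s+)?(ba|z)?sh\b"),
    # Flags that turn off TLS certificate verification in curl or wget.
    "tls-verification-disabled": re.compile(r"--insecure\b|--no-check-certificate\b"),
    # Containers started with full host privileges.
    "privileged-container": re.compile(r"--privileged\b"),
    # World-writable permissions applied to files or directories.
    "world-writable-chmod": re.compile(r"\bchmod\s+(-R\s+)?0?777\b"),
}


def scan_text(text):
    """Return (line number, pattern name) pairs for every suspicious line."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SUSPICIOUS_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

Treat each finding as a prompt to read the surrounding script by hand, not as a verdict.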

Read the Project Like an Operator, Not Just a Developer

The fastest way to miss risk is to review only application source and ignore everything around it. Start with the files that define behavior outside the core code path: build manifests, startup scripts, dependency declarations, container recipes, orchestration files, task runners, and environment templates. These artifacts often reveal more about real-world risk than the main application logic.

Focus on questions that map directly to server impact. What user does the process expect to run as? What directories must be writable? Does startup download extra artifacts from uncontrolled locations? Does the application bind to all interfaces by default? Are debug endpoints enabled? Does it attempt to mutate system state, register services, or write into privileged paths?

Container definitions deserve special attention. Community security guidance for containers recommends minimal images, separation of build and runtime stages, removal of unnecessary packages, avoidance of embedded secrets, and reducing special permissions. If a container recipe ships with excess tooling, package managers, shells, and compiler chains in the final runtime image, that is an operational smell. Every extra binary gives an attacker more to work with after a compromise.
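One of these checks is easy to script: whether the final stage of a container recipe switches away from root. This is a rough sketch, assuming a Dockerfile-style recipe; it ignores line continuations and build arguments, and it treats a missing USER instruction as root, which is the usual default but not guaranteed because a base image can set its own user. The function names are hypothetical.

```python
def final_stage_user(dockerfile_text):
    """Return the last USER value set in the final build stage, or None if unset."""
    user = None
    for raw in dockerfile_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split()
        keyword = parts[0].upper()  # Dockerfile instructions are case-insensitive
        if keyword == "FROM":
            user = None  # a new stage begins; forget USER from earlier stages
        elif keyword == "USER" and len(parts) > 1:
            user = parts[1]
    return user


def runs_as_root(dockerfile_text):
    """Assume root when the final stage never sets USER or sets it to root/0."""
    user = final_stage_user(dockerfile_text)
    return user is None or user.split(":")[0] in ("root", "0")
```

A True result is a reason to open the recipe and confirm what the base image does, not proof of a problem.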

Also review sample configuration files. Development defaults often include permissive host bindings, verbose error output, weak session settings, or temporary credentials. Secure operation requires flipping that posture: strict network exposure, explicit secrets management, and production-grade logging without leaking sensitive material.

Audit Dependencies Before They Audit You

Dependency review is not glamorous, but it is where many failures begin. Public documentation on software supply chain security makes the point clearly: your project inherits the risk of the components it pulls in. That includes direct libraries, nested packages, build plugins, and system packages pulled in during image creation or compile steps.

At a minimum, identify whether versions are pinned, whether lock files exist, and whether install paths are deterministic. Floating versions can quietly change behavior between deployments. Missing lock files make forensic comparison harder. Build scripts that resolve packages differently across environments create invisible drift, which is the enemy of debugging and containment.
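As an illustration of the pinning check, the sketch below flags entries in a pip-style requirements file that are not pinned to an exact version. It assumes a Python project purely for the sake of example; other ecosystems have their own manifest and lock file formats. The regular expression is a simplification of the real requirement syntax, and the function name is hypothetical.

```python
import re

# Matches "name==version" with optional extras and an optional environment marker.
# Wildcard versions such as "1.26.*" are deliberately not treated as pinned.
PINNED = re.compile(
    r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[^\s;*]+\s*(;.*)?$"
)


def unpinned_requirements(text):
    """Return requirement lines that do not pin an exact version."""
    loose = []
    for raw in text.splitlines():
        # Drop comments and a trailing line-continuation backslash.
        line = raw.split("#", 1)[0].strip().rstrip("\\").strip()
        if not line or line.startswith("-"):
            continue  # skip blanks and pip options such as -r or --hash
        if not PINNED.match(line):
            loose.append(line)
    return loose
```

An exact pin still trusts the package index to serve the same artifact each time; hash-checked lock files close that gap where the tooling supports them.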

Then ask whether the dependency set is justified. Many repositories accumulate tooling that made sense during development but is unnecessary in production. Removing unused packages is not just cleanup; it reduces attack surface and simplifies patching. If the project can run with fewer modules, slimmer images, and fewer interpreters, that is usually the safer path.

Be equally skeptical of post-install and pre-start hooks. These are legitimate mechanisms, but they are also convenient places for hidden behavior. Trace what they invoke, what they download, and what permissions they assume. If you cannot explain the full chain, do not promote the build into a production environment.
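To make hooks visible before anything runs, list them from the manifest. This sketch assumes a Node.js package whose package.json may define npm lifecycle scripts; the function name is hypothetical, and it only inspects the top-level manifest, not the hooks declared by dependencies.

```python
import json

# npm lifecycle scripts that can run automatically as part of an install.
INSTALL_HOOKS = ("preinstall", "install", "postinstall", "prepare")


def install_hooks(package_json_text):
    """Return the install-time lifecycle scripts declared in a package.json."""
    manifest = json.loads(package_json_text)
    scripts = manifest.get("scripts", {})
    return {name: scripts[name] for name in INSTALL_HOOKS if name in scripts}
```

Each command returned here is a starting point for tracing what gets invoked and downloaded during installation.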

Use Isolation as a Design Rule, Not a Last-Minute Fix

One of the most durable security ideas in server operations is least privilege. Application security guidance describes it as limiting users, services, and processes to only the access required for intended function. In plain operational terms, if a service only needs to read one directory, write one log path, and talk to one database endpoint, then that should be its whole world.

That principle should shape deployment architecture. Run the application under a dedicated system identity. Give it access only to required files. Avoid broad host mounts. Do not let a web-facing process read unrelated application data. If a repository needs writable storage, define it narrowly and inspect what it writes there over time.

Isolation also applies at the runtime boundary. If you use containers, treat them as a containment aid, not a magic wall. Container security guidance warns that weak host posture, overbroad permissions, and careless daemon exposure can collapse that boundary quickly. Keep runtime images small, avoid privileged modes, prefer read-only filesystems where possible, and expose only the ports the service truly needs.
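Encoding those choices in a small helper keeps them from being forgotten during a rushed launch. The sketch below builds a `docker run` argument list with a read-only root filesystem, all capabilities dropped, a non-root user, and a port published only on loopback. It assumes Docker as the runtime; the function name, default UID, and tmpfs path are illustrative assumptions, and a real service may need specific capabilities or writable paths added back deliberately.

```python
def hardened_run_args(image, host_port, container_port, uid=10001, writable_tmpfs=("/tmp",)):
    """Build a docker run command line with restrictive defaults."""
    args = [
        "docker", "run", "--detach",
        "--read-only",                       # root filesystem mounted read-only
        "--cap-drop=ALL",                    # start from zero Linux capabilities
        "--security-opt=no-new-privileges",  # block privilege escalation via setuid binaries
        f"--user={uid}:{uid}",               # run as an unprivileged UID and GID
        f"--publish=127.0.0.1:{host_port}:{container_port}",  # reachable from the host only
    ]
    for path in writable_tmpfs:
        args.append(f"--tmpfs={path}")       # scratch space that does not persist
    args.append(image)
    return args
```

Publishing on 127.0.0.1 assumes a proxy on the same host forwards external traffic; the resulting list can be passed to `subprocess.run` without a shell.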

If you do not use containers, the same concepts still apply. Separate users, separate paths, separate service definitions, and separate failure domains. A public repository should never gain the ability to wander across your entire machine because setup was rushed.

Build a Staging Path Before Production Ever Sees the Code

Experienced operators know that “works on my laptop” is not a deployment standard. A staging path gives you somewhere to observe process behavior, startup order, outbound requests, filesystem mutations, and resource patterns before the service touches production traffic. This is especially useful when onboarding unfamiliar code from a public repository.

In staging, inspect runtime assumptions aggressively. Watch open ports, check which interfaces are bound, trace external connections, review process trees, and compare expected versus actual file writes. If the application starts helper processes you did not plan for, reaches remote endpoints not documented by the project, or requires broader privileges than claimed, that is exactly the kind of divergence you want to catch early.
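The listener check in particular reduces to a small comparison between what was planned and what was observed. This is a minimal sketch, assuming you have already collected (address, port) pairs from whatever socket listing tool your platform provides; the function names and the three category labels are hypothetical.

```python
import ipaddress


def exposure(bind_address):
    """Classify a listener address as 'loopback', 'wildcard', or 'specific'."""
    host = bind_address.strip("[]")  # tolerate bracketed IPv6 such as [::]
    if host == "*":
        return "wildcard"
    ip = ipaddress.ip_address(host)
    if ip.is_unspecified:            # 0.0.0.0 or :: means every interface
        return "wildcard"
    if ip.is_loopback:
        return "loopback"
    return "specific"


def unexpected_listeners(observed, allowed_ports):
    """Flag listeners bound to all interfaces or using ports that were not planned."""
    flagged = []
    for address, port in observed:
        if exposure(address) == "wildcard" or port not in allowed_ports:
            flagged.append((address, port))
    return flagged
```

For example, a service expected only on port 8080 that also opens a debug port on loopback would show up in the flagged list even though it is not externally reachable.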

Use this phase to validate failure behavior too. Kill the process. Rotate credentials. Restart the service. Fill disk space in a controlled test. Break network resolution. Force bad input into noncritical paths. You are not trying to be destructive; you are trying to answer whether the application fails closed, logs usefully, and recovers predictably.

Staging is also where you freeze your deployment method. Once you know the service can run safely, encode the process so production is reproducible rather than improvised. Manual setup done from memory is where drift and privilege mistakes multiply.

Harden the Network Edge and Internal Access Paths

Many repository guides assume direct exposure for convenience, but internet-facing services should sit behind a controlled edge. A reverse proxy or gateway can terminate encrypted traffic, normalize headers, restrict methods, and hide internal ports. Security references discussing application firewalls and reverse proxy patterns treat this layer as a useful choke point because it narrows how requests reach the app.

That does not mean adding complexity for its own sake. It means reducing unnecessary visibility. If the service listens on an internal port, keep it internal. If an admin route exists, gate it. If the app only needs inbound web traffic and outbound access to a small set of resources, then your packet rules should reflect that reality. Broad exposure is easy; disciplined exposure is what keeps incidents small.

Remote administration needs the same rigor. Restrict management access, separate operator identities from service identities, and avoid reusing credentials across unrelated workloads. If a public project is compromised, segmented access patterns can keep the blast radius contained to one service instead of the whole machine or cluster.

Secrets, Config, and State Need Their Own Threat Model

Many incidents begin with a simple operational shortcut: a secret committed into a config file, copied into an image, or left in an example template. Public code often includes environment samples, but your production values should never live where source, images, or logs can casually expose them. Keep credentials external to the build artifact and inject them through controlled runtime mechanisms.
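One simple runtime mechanism is the process environment, populated by the service manager or orchestrator rather than by a file in the repository. The sketch below reads required secrets at startup and fails closed if any are absent. The variable names used in the usage note are hypothetical, and environment variables are only one option; a dedicated secrets store may suit your platform better.

```python
import os


class MissingSecret(RuntimeError):
    """Raised when a required secret is absent or empty at startup."""


def load_secrets(names, environ=None):
    """Return the named secrets from the environment, or refuse to start."""
    env = os.environ if environ is None else environ
    missing = [name for name in names if not env.get(name)]
    if missing:
        # Report variable names only; never echo secret values into logs.
        raise MissingSecret("missing required secrets: " + ", ".join(sorted(missing)))
    return {name: env[name] for name in names}
```

Calling `load_secrets(["APP_DB_PASSWORD", "APP_API_TOKEN"])` early in startup means a misconfigured deployment stops immediately instead of running with fallback defaults.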

The same caution applies to state. If the application stores uploads, reports, sessions, or generated assets, understand retention, cleanup, and permission boundaries before launch. Writable paths deserve scrutiny because they become persistence surfaces after compromise. A service that can write almost anywhere can often survive remediation attempts by dropping modified files in unexpected places.
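Scrutiny of a writable path can start with a before-and-after comparison. This sketch hashes every file under a directory and reports what was added, removed, or changed between two snapshots. It is an illustration for small directories: it reads each file fully into memory and does not handle broken symlinks or files that disappear mid-scan. The function names are hypothetical.

```python
import hashlib
import os


def snapshot(root):
    """Map each file path (relative to root) to the SHA-256 digest of its contents."""
    digests = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            with open(full, "rb") as handle:
                digest = hashlib.sha256(handle.read()).hexdigest()
            digests[os.path.relpath(full, root)] = digest
    return digests


def diff_snapshots(before, after):
    """Summarize which files were added, removed, or changed between snapshots."""
    return {
        "added": sorted(set(after) - set(before)),
        "removed": sorted(set(before) - set(after)),
        "changed": sorted(p for p in before.keys() & after.keys() if before[p] != after[p]),
    }
```

Taking one snapshot before a staging run and another afterward shows exactly which files the service touched in its writable area.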

Database or service credentials should be scoped tightly. Guidance on least privilege repeatedly stresses that applications should receive only the permissions their function requires. Read-only means read-only. Write access should target only necessary objects. Administrative capability should remain outside the application runtime entirely.

Logs, Backups, and Rollback Matter More Than Perfect Confidence

No review process eliminates uncertainty. What saves you in practice is observability and recovery. A newly deployed service should produce logs that help answer basic questions fast: what started, what failed, what changed, who accessed which route, and which subsystem emitted the error. Logging should be structured enough for troubleshooting, but restrained enough to avoid leaking tokens, payloads, or private records.
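Restraint can be enforced in the logging pipeline itself. The following sketch uses a standard-library logging filter to mask values that follow common key names such as `token=` or `password=`. The key list is an assumption and will miss other formats (JSON bodies, authorization headers), so treat it as a safety net rather than a substitute for not logging secrets in the first place.

```python
import logging
import re

# Hypothetical list of key names whose values should never reach a log file.
SENSITIVE = re.compile(r"(?i)\b(token|password|secret|api[_-]?key)=([^\s&]+)")


class RedactingFilter(logging.Filter):
    """Mask the values of sensitive key=value pairs before a record is emitted."""

    def filter(self, record):
        # Render the final message first so values passed as arguments are covered too.
        message = record.getMessage()
        record.msg = SENSITIVE.sub(r"\1=[REDACTED]", message)
        record.args = None
        return True  # keep the record, just with masked content
```

Attaching it with `handler.addFilter(RedactingFilter())` applies the masking to everything that handler writes.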

Backups are often discussed as reliability features, yet they are also security controls. If a repository update corrupts state, if an operator makes a bad migration call, or if a compromise forces rebuild and restore, your backup strategy determines whether recovery is a routine operation or a business event. Test restore paths, not just backup creation. An untested archive is wishful thinking with storage attached.
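A cheap first step toward testing restores is confirming that an archive is readable and still contains what was recorded when it was made. The sketch below assumes tar-based backups and a manifest of SHA-256 digests captured at backup time; both are assumptions for illustration, and the function names are hypothetical. Passing this check does not replace restoring into a staging environment and starting the service against the restored data.

```python
import hashlib
import tarfile


def archive_digests(archive_path):
    """Read every regular file in a tar archive and return {member name: SHA-256}."""
    digests = {}
    with tarfile.open(archive_path, "r:*") as archive:  # "r:*" auto-detects compression
        for member in archive:
            if member.isfile():
                data = archive.extractfile(member).read()
                digests[member.name] = hashlib.sha256(data).hexdigest()
    return digests


def verify_backup(archive_path, manifest):
    """Return manifest entries that are missing from the archive or whose contents differ."""
    actual = archive_digests(archive_path)
    return sorted(name for name, digest in manifest.items() if actual.get(name) != digest)
```

An empty result means every file in the manifest was found with matching contents; anything else names the entries to investigate.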

Rollback plans should be explicit. Keep prior deployable artifacts, preserve configuration history, and know how to revert without manually reconstructing the environment. This is where a disciplined process for deploying open source code from GitHub pays off: reproducible build inputs and clean environment separation turn rollback into procedure rather than panic.

Common Mistakes Engineers Still Make

The most common failure is trusting setup instructions more than system boundaries. If a guide says to run everything as an elevated user, many people do exactly that and move on. Another frequent mistake is exposing a service directly because it is easier than placing it behind a controlled edge. Equally common are unpinned dependencies, writable host mounts that are broader than necessary, and production systems that still carry development defaults.

There is also a cultural mistake: treating public code as inherently peer reviewed. In reality, many repositories are functional but operationally immature. They may solve a useful problem and still be poor candidates for direct production use. Engineers who separate code quality from deployment safety make better decisions than those who conflate the two.

A subtler error is neglecting maintenance after first launch. Safe deployment is not a one-time gate. New vulnerabilities appear, dependencies age, maintainers change direction, and undocumented behaviors surface under load. If you adopt a repository, you adopt its lifecycle. That includes update review, rebuild discipline, and periodic permission cleanup.

A Practical Workflow for Safe Adoption

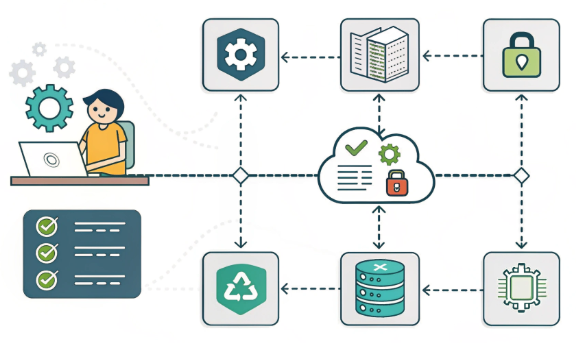

A solid workflow is simple to describe, even if carrying it out takes care. First, inspect the repository, release patterns, config files, startup scripts, and dependency definitions. Next, build it in a disposable environment and observe its runtime behavior. Then reduce privileges, isolate filesystem access, narrow network exposure, and externalize secrets. After that, stage the service behind a controlled edge, verify logging and restore paths, and only then consider production traffic.

This workflow is intentionally boring. That is a virtue. Security failures often arrive through novelty, haste, or hidden assumptions. Repeating a clear review path makes unfamiliar code legible. It also helps teams split responsibility cleanly between application review, platform hardening, and ongoing operations.

For teams using hosting or colocation environments, the same logic applies. The substrate may change, but the discipline does not. Whether the workload runs on a single machine, a virtual instance, or a denser infrastructure footprint, safe deployment still depends on verification, isolation, monitored execution, and controlled rollback.

Final Take: Treat Public Code as a Component, Not a Shortcut

Engineers get real leverage from public repositories, but the gain comes from disciplined integration, not blind trust. Review the code like an operator, audit the dependency graph like a skeptic, isolate the runtime like you expect failure, and maintain the service like it belongs to your estate. If you do that, deploying open source code from GitHub becomes a repeatable engineering practice rather than a gamble disguised as productivity.